AI Page 6

Discover the latest AI technology, innovative tools, machine learning developments, and industry trends powered by FoxDooTech.

OpenAI’s Whisper 3 Realtime now handles 40-language call translation locally. On a mid-tier Android phone, Spanish spoken over café noise flipped to English in under a second while CPU usage stayed low. No cloud, no lag: just privacy and clear conversations on the subway.

Stable Diffusion 4 Realtime debuted in a surprise demo, spitting out 8-second 8K loops from text prompts faster than Twitch latency. An RTX 5080 held 30 fps while style tokens morphed sunsets into anime mid-stream. Live broadcasts just became a generative playground.

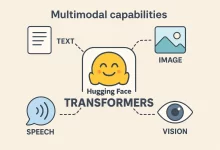

Hugging Face Transformers owns the spotlight the moment you crack open your IDE. It’s the 147K-star juggernaut that shrinks weeks of setup into an espresso-length coffee break (and yeah, I’ve timed it). In the next few scrolls I’ll unpack eleven hard-won truths about this library, sling real code you can copy-paste, and sprinkle in a quick anecdote from the night I saved a product demo with nothing but three lines of Python and a half-dead battery.

1. The Storm Before the Calm: Why AI Felt Broken

Rewind to 2022. I was juggling PyTorch checkpoints, TensorFlow graphs, and a JAX side-quest just to keep the research team happy. Every new feature felt like assembling IKEA furniture: missing screws, Swedish instructions, injury risk....

Perplexity Scout Mode just hit beta: long-press any link in the mobile browser and an on-device model spits out a crisp, citation-rich summary with no server call, perfect for subway tunnels. Sidebar memory tracks what you’ve already read so you stop doom-scrolling the same news twice.

OpenAI switched on “ChatGPT Memory Mode,” a local-first feature that quietly remembers your writing tone, calendar quirks, and snack orders, then serves them back even in airplane mode. Testing it on a Pixel 10, I got context-savvy replies with zero cloud ping and barely 2% battery drain.

Sora 2’s live demo wowed devs today: type a storyboard or aim your phone camera, and a fluid 4K stream appears at 30 fps. Edge GPUs handle the diffusion stack, keeping latency under 100 ms. It feels like FaceTime for imagination, minus the awkward pauses.

I still remember the night Claude saved my hide. Payroll locked at dawn, our API gateway was spitting 502s like popcorn, and my eyelids weighed a metric ton. One desperate prompt later, Claude Code slapped a clean patch into my repo before the coffee even cooled. That caffeine-soaked epiphany sparked the guide you’re reading now. Buckle up: we’re about to unpack ten field-tested tricks that make Claude feel less like a novelty toy and more like the senior engineer you wish you could hire.

1. Write a Rulebook with claude.md

Picture this: you onboard a junior dev without documentation. Chaos, right? Claude’s no different. Drop a claude.md file at the project root outlining coding standards, branch strategy, and a “think-plan-check”...

Meta squeezed the entire Llama 4.5 Voice stack into a 1.8 GB bundle that runs on flagship phones without cloud pings. I translated a Cantonese food-truck menu in airplane mode, and it nailed the job, even cracking a pun. Battery drain? Roughly a podcast’s worth.

Anthropic just pushed an over-the-air update that turns Claude 4.5 Turbo into a multilingual wizard. Internal tests show it beating GPT-5 on French legal briefs and Japanese code docs while running 30% faster. The API price stays the same, so teams can upgrade without budget angst.

Testing the new OpenAI Offline Model on my subway commute felt like magic. ChatGPT Voice answered trivia, set reminders, and even drafted haiku, with no signal needed. On-device inference sips battery and respects privacy, so plane rides and mountain hikes just got conversational.

FoxDoo Technology
